Artificial Intelligence

With its unique mandate, UNESCO has for decades led the international effort to ensure that science and technology develop with strong ethical guardrails.

Be it on genetic research, climate change, or scientific research, UNESCO has delivered global standards to maximize the benefits of scientific discoveries while minimizing the downside risks, ensuring they contribute to a more inclusive, sustainable, and peaceful world. It has also identified frontier challenges in areas such as the ethics of neurotechnology, climate engineering, and the internet of things.

These general-purpose technologies are reshaping the way we work, interact, and live. The world is set to change at a pace not seen since the deployment of the printing press six centuries ago. AI technology brings major benefits in many areas, but without ethical guardrails it risks reproducing real-world biases and discrimination, fueling divisions, and threatening fundamental human rights and freedoms. AI business models are highly concentrated in just a few countries and a handful of firms, and the technology is usually developed in male-dominated teams, without the cultural diversity that characterizes our world. Contrast this with the fact that half of the world’s population still can’t count on a stable internet connection.

To correct this, under the leadership of UNESCO’s Director-General Audrey Azoulay, and with a clear mandate from our Member States, UNESCO elaborated the world’s most comprehensive international framework to shape the development and use of AI technologies. The Recommendation on the Ethics of Artificial Intelligence was adopted by acclamation by 193 Member States at UNESCO’s General Conference in November 2021. This comprehensive instrument was two years in the making and is the product of the broadest global consultation process, involving experts, developers, and other stakeholders from all around the world.

Four core values of the Recommendation

A Human Rights Approach to AI

"There is an urgent need to rebalance the situation for women in AI to avoid biased analyses and to build technologies that take into account the expectations and needs of all of humanity."

Latest about AI

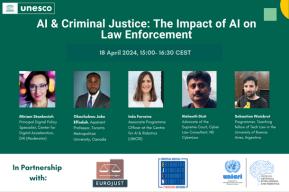

Events